The Problem

If you've worked with coding agents extensively, then you've probably noticed this pattern: at the start of each session, they act like you're meeting for the first time.

They don't:

- remember anything about your project,

- know why things were built a certain way,

- apply corrections from Tuesday's session to Friday's work,

- or build a usable past that can inform future decisions.

Every session starts from scratch. The agent must be onboarded every time, and you're the one filling in the gaps.

In this article, I'll demonstrate why memory is an essential component for overcoming this deficiency, and outline the mechanisms necessary to make memory function for agents.

Brute Force Doesn't Scale

To be fair, you don't need to give your agents memory. You can brute force good outcomes. Retry loops, corrective prompts, task reframing, and ever-growing instruction files can get you there... eventually.

But is that our aim?

It's expensive, unreliable, and it introduces context rot.

Context rot is what happens when a growing context window has diminishing influence on the model's output. Relevant signals remain present, just buried and unused. (Chroma Research)

It's also simplistic if we use correctness as the single measure of success. Unnecessary turns cost time, money, and most importantly, patience that we should place a premium on.

We shouldn't be aiming for eventual success. The target should be continuity and determinishtic1, repeatable outcomes at minimal cost.

1 LLMs are probabilistic by nature. Perfect determinism is not achievable. The best we can hope for are reasonable approximations.

What Is Memory?

If we want to fix the missing memory problem, then we need to understand what memory is and how it works. We all understand memory intuitively, but the mechanism is more complex than it seems. Let's break it down...

Memory is the encoding, storage, and retrieval of information when needed.

There are three main types:

Short-term Memory

This is sensory information that is maintained for about thirty seconds while the brain determines whether it's relevant enough to encode and store. I mention it here for completeness, but it won't come up again. It's not where agents struggle.

Long-term Memory

This is information that is encoded, stored and made retrievable over long periods of time. There are two subtypes that are important to distinguish: declarative (facts and events) and non-declarative (skills, habits, priming).

Working Memory

This one is very important, because it is the brain's workspace, and is what makes accomplishing tasks possible. It manipulates information and depends heavily on long-term memory. Without working memory, you can know things but you can't apply them.

Disclaimer: The concepts presented here are watered down from cognitive neuroscience research. The brain is complex and there are many nuances and subtypes of memory. What is presented here is known to be true, and sufficient for the purpose of this article. A list of sources is included at the end for anyone who wants to dive deeper.

The Tools of Working Memory

So, working memory is what makes accomplishing tasks possible, and long-term declarative memories are essential to its function. But, we're not done yet.

You also need control processes, which must also be in place to determine what information is relevant to the current goal and filter out what isn't.

There are many models of control processes that aid in the function of working memory. Here are those that concern us:

- Central executive

- This is the control center of working memory. It coordinates attention, selects goals, and determines what information is relevant to the task at hand.

- Top-down processing

- This is the ability to draw on prior knowledge to synthesize and make sense of new information. It allows us to fill in gaps, make inferences, and apply what we know to new situations.

- Episodic buffer

- This is a temporary storage system that binds together information from different sources into a coherent working state. It allows us to integrate information from long-term memory, sensory input, and other cognitive processes.

You can see that working memory is not one mechanism. It emerges from these processes working together to retrieve, filter, and bind information in service of a goal.

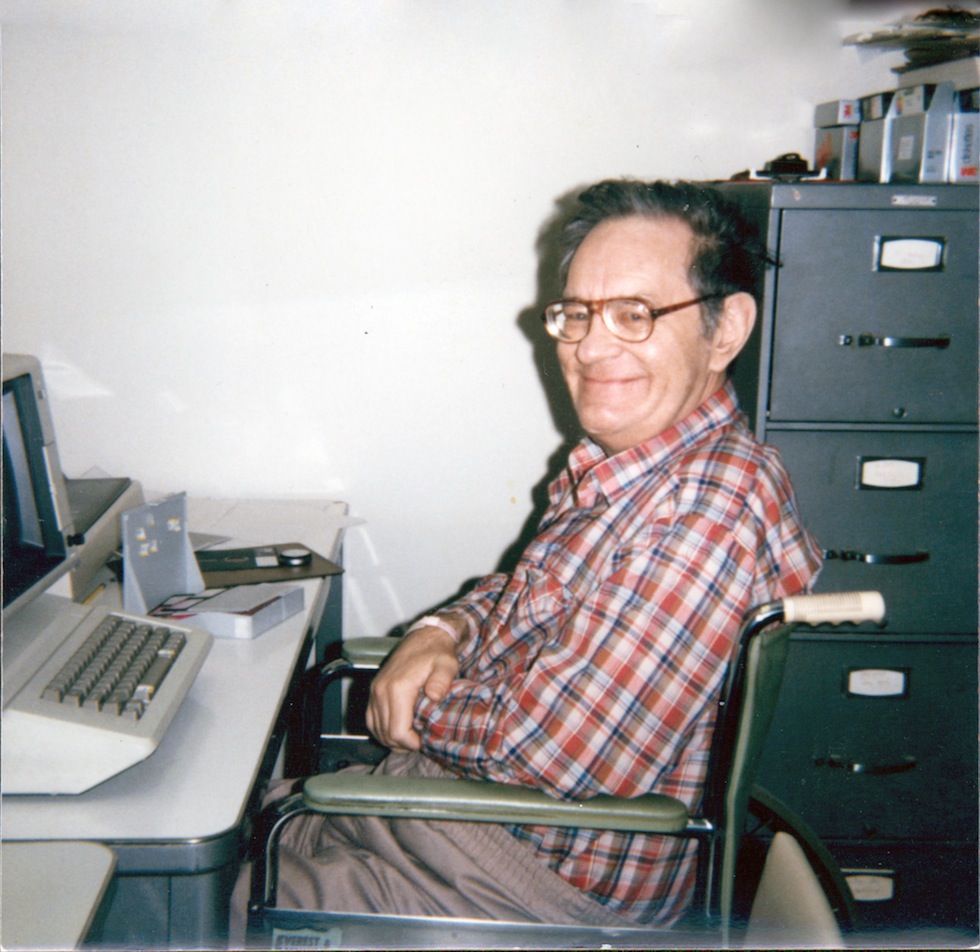

The Case of Henry Molaison

This is Henry Molaison. At the age of 27, he had parts of his temporal lobes, and large parts of his hippocampus, removed to treat severe epilepsy.

After the operation, his epilepsy remained, but he lost the ability to form new long-term declarative memories. All of his existing memories were intact. Interestingly, he retained the ability to learn new skills, but he couldn't reliably encode new declarative memories.

He could act, but couldn't recall facts or events that had occurred after his surgery. Every encounter, every task started from zero — no accumulated context, no prior knowledge to draw on.

Henry was capable, but perpetually starting over.

Sound familiar?

An agent can perform the task in front of it. What is missing is not capability, but declarative continuity across sessions: what was decided, why it was decided, what constraints exist, and what matters to subsequent goals.

Agent Amnesia

In that sense, coding agents are a lot like Henry.

Their long-term memory is limited to the data they were trained on and the non-declarative memories you provide them via SKILL.md and AGENTS.md. But they don't reliably accumulate knowledge or retrieve it later when it's needed, because they lack structured declarative memory for facts and events, and control processes to employ those memories effectively.

Agent amnesia is real.

The Missing Primitive

We now understand that declarative memory is necessary for working memory to function, and that it is insufficient on its own. This is no different for agents.

We can't just supply an agent with a knowledge graph and call it done. We'd still be missing the control processes that make working memory function. Those processes are the "when needed" part of the definition of memory. Without them, the agent is left to its own devices and is prone to bloat its context window.

There needs to be a system for retrieving stored information, deciding what matters, filtering out the rest, assembling it, and delivering it into working memory.

Finally, you need something that all these pieces organize around. That is a goal — the very thing these processes are meant to serve.

Why Do I Need a System?

Strictly speaking, you don't need a system. Here are two common approaches in practice today:

1. Manual curation

You maintain a library of markdown files for every invariant, guideline, component, constraint and decision, then selectively paste what's relevant into a prompt before each session.

That's a lot of energy and attention that could be better spent reading the code your agent writes before you ship it.

2. The dump

Every correction, every rule, every note from the history of the project gets dumped in your AGENTS.md file. But a correction from one context isn't universal, and irrelevant context distracts more than it guides.

Christopher Meiklejohn describes where that path leads: repeated regressions, emergency fixes, and a CLAUDE.md file that keeps growing as each new failure leaves behind another rule. The document becomes a record of incidents, not a reliable mechanism for changing the agent's behavior.

One approach depends on repeated manual assembly, risks omission, and pulls focus away from the work itself. The other hoards context until relevance collapses. What both lack is an automated system that retrieves and serves the right context at the right time. The advantage is not that the system stores more information, but that it shifts retrieval and scoping from repeated manual effort into a reusable goal-driven process.

System Design

If we want a system, we can map it directly from the cognitive model:

| Cognitive Need | System Implementation |

|---|---|

| Non-declarative memory | Reusable operating instructions and protocols (SKILL.md, AGENTS.md) |

| Declarative memory | Structured memory store for facts, events and relations |

| Binding mechanism | Goal entity and relation graph |

| Episodic buffer | Goal-scoped context assembler |

| Central executive | Goal orchestration layer (backlog, state model, and routing rules) |

| Top-down processing | Goal-driven retrieval, prioritization, and relevance filtering |

These mappings describe functional responsibilities, not exclusive actors. Depending on the implementation, the developer, the orchestration layer, and one or more agents may share these responsibilities across different phases.

In total, you get a goal-driven memory system that serves the right context at the right time, and a reliable starting point for action.

From Goal to Action: A Memory Cycle

This isn't just a collection of parts — it's a functional cycle centered on a goal. I recommend each phase runs in its own session:

1. Define

A goal is described. This is just an objective, success criteria and scope. Nothing more. Minimalism is key here. That and impeccable wording.

This is also where you want most of your focus to be as the developer. It's how you maintiain continuity. You want to spend the majority of your time here thinking about what you're building — not constantly onboarding agents.

2. Refine

Relevant long-term memories are retrieved and bound to the goal as relations: invariants, guidelines, components, decisions, and dependencies. Irrelevant information should also be filtered out in this phase, so it doesn't rot the context or distract the agent.

The result is a context packet filtered and scoped to the goal. This is top-down processing, long-term memory retrieval, and the episodic buffer working together.

The job of this phase is not to solve the goal, but to assemble the context in which solving it becomes reliable.

3. Execute

At session start, the agent should receive a small orientation packet about project state. This provides a stable starting frame before goal-specific work begins.

Once a goal is selected, the orchestration layer resolves its current state, determines which context should be served, and delivers the goal-bound packet assembled during Refine. The purpose of this phase is not to rediscover what matters, but to apply the context already assembled for the goal.

When new information emerges — corrections, constraints, or discoveries — it must be captured so it can be reconciled into long-term memory before it is lost.

This is the central executive and top-down processing in action: selecting the active goal, constraining attention to what matters, and applying prior knowledge to ongoing work.

4. Review

The result is evaluated against the same goal context that guided execution. Did it meet the criteria? Did it respect the invariants? The goal is either approved or rejected with feedback.

This is the safety net for the "ish." Critics point to "hallucination" as a fatal flaw, but in a memory-driven system, failure is rarely a mystery — if Refine retrieves the wrong memory, then Execute fails deterministically. Review is the control process that catches that failure — validating the agent's work against the project's established memory.

Rejection is not failure in this model. It is signal. Issues should feed back into the system as memory, as subsequent goals or blockers on the current one.

5. Codify

This phase can be thought of like bookkeeping: update documentation, commit new components to memory, and record the changes to the CHANGELOG.

Each phase is a repeatable exchange. That makes every phase a candidate for a non-declarative skill definition — a protocol for using the system that can be encoded once and reused across every goal that passes through the cycle.

This is not a simplistic workflow. It is a cycle where each iteration leaves the system smarter than the last.

Structure Still Matters

Adding memory helps tremendously, but it's still important that your codebase is well structured and that you follow SDLC best practices.

Clean code, SOLID, separation of concerns, common closure, DDD, Clean Architecture, and employing established design patterns — these aren't ceremony. They make your solution legible to agents, not just humans.

Fortunately, you can bake them into your memory system when you have it in place. And you should. The Liskov Substitution Principle would have little to do with many frontend tasks, so it shouldn't be in AGENTS.md where it will show up as rot in every session. But it should be in long-term memory, retrievable when relevant to the goal at hand.

Working with agents is a new paradigm and it requires new skills. Keeping goals small, sessions independent, and reading the code before you ship it will all take you far.

There is much being written about the merits of these points. Still, I think it's worth emphasizing here, because memory is not a silver bullet. It compliments the software engineering principles and best practices that are essential to realizing good outcomes with agents.

Closing

We've covered a lot of ground here: the problem of agent amnesia, the cognitive model of memory, the design of a system to solve it, and how to use that system to create a functional memory cycle.

If nothing more, then I hope you take away these two key insights from this article:

- Agents are capable, but memory gives them continuity and makes them consistent.

- Memory is a system, not a feature. It's not just about giving agents access to more information. It's about providing mechanisms for retrieving, filtering, and binding that information in service of a goal.

I've deliberately left out implementation details. Each component can be a deep rabbit hole of design decisions and tradeoffs, and worthy of a complete article in its own right.

If you want to see one way to implement this, check out Jumbo — it's open source and designed for exactly this problem. Otherwise, I hope this article gives you a useful framework for thinking about building your own system.

About the Author

Josh Wheelock is an improbable software engineer. In his twenties, he toiled as a furniture maker and in other skilled trades before turning his attention to code. He is a solution architect and also the creator of the open source project Jumbo CLI — Memory and Context Management for Coding Agents. As a lifelong exploiter of punctuation, Josh found great utility in the em dash before the rise of LLMs, and continues to do so. He labored extensively over this article with AI manning the editorial desk.

Notes

- Long context retrieval degrades with poor placement. Liu et al. show that model performance often drops when relevant information appears in the middle of long contexts, even for explicitly long-context models. This supports the claim that larger instruction dumps can become less useful, not just more expensive.

- Conflicting knowledge is hard for models to resolve. Wang et al. argue that LLMs may detect that a conflict exists, but still struggle to pinpoint the conflicting segments and respond robustly when internal knowledge and prompt context disagree.

- Knowledge conflict is broader than staleness. Xu et al. survey context-memory, inter-context, and intra-memory conflict, which is a useful framing for understanding why accumulated prompt instructions become less trustworthy over time.

- Code generation shows a similar failure mode. Ashik et al. show that models continue producing outdated API usage patterns even when given updated documentation, which is a direct software-engineering example of stale internal knowledge competing with fresh external context.

- Memory helps only when retrieval is goal-directed. Weng's agent overview is useful here: memory, planning, and tool use are separate concerns. Long-term memory only helps if the system can retrieve and bind the right information for the current goal.

Reading List

- Corkin, Suzanne. Permanent Present Tense (2013)

- Kandel, Eric R. In Search of Memory (2006)

- "Working Memory — A Conversation with Dr. Alan Baddeley," Navigating Neuropsychology (2022, podcast)

- Liu, Nelson F., et al. Lost in the Middle: How Language Models Use Long Contexts (2023)

- Weng, Lilian. LLM Powered Autonomous Agents (2023)

- Wang, Yike, et al. Resolving Knowledge Conflicts in Large Language Models (2024)

- Xu, Rongwu, et al. Knowledge Conflicts for LLMs: A Survey (2024)

- Ashik, Ahmed Nusayer, et al. When LLMs Lag Behind: Knowledge Conflicts from Evolving APIs in Code Generation (2026)